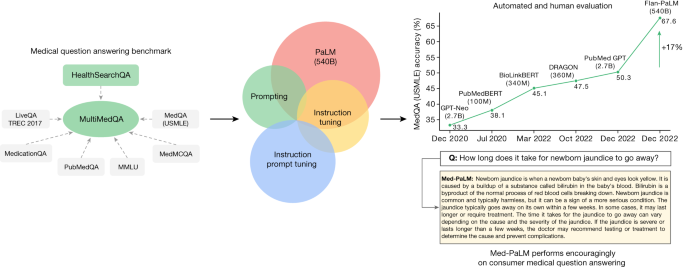

Large language models (LLMs) have demonstrated impressive capabilities, but the bar for clinical applications is high. Attempts to assess the clinical knowledge of models typically rely on automated evaluations based on limited benchmarks. Here, to address these limitations, we present MultiMedQA, a benchmark combining six existing medical question answering datasets spanning professional medicine, research and consumer queries and a new dataset of medical questions searched online, HealthSearchQA. We propose a human evaluation framework for model answers along multiple axes including factuality, comprehension, reasoning, possible harm and bias. In addition, we evaluate Pathways Language Model1 (PaLM, a 540-billion parameter LLM) and its instruction-tuned variant, Flan-PaLM2 on MultiMedQA. Using a combination of prompting strategies, Flan-PaLM achieves state-of-the-art accuracy on every MultiMedQA multiple-choice dataset (MedQA3, MedMCQA4, PubMedQA5 and Measuring Massive Multitask Language Understanding (MMLU) clinical topics6), including 67.6% accuracy on MedQA (US Medical Licensing Exam-style questions), surpassing the prior state of the art by more than 17%. However, human evaluation reveals key gaps. To resolve this, we introduce instruction prompt tuning, a parameter-efficient approach for aligning LLMs to new domains using a few exemplars. The resulting model, Med-PaLM, performs encouragingly, but remains inferior to clinicians. We show that comprehension, knowledge recall and reasoning improve with model scale and instruction prompt tuning, suggesting the potential utility of LLMs in medicine. Our human evaluations reveal limitations of today’s models, reinforcing the importance of both evaluation frameworks and method development in creating safe, helpful LLMs for clinical applications. Med-PaLM, a state-of-the-art large language model for medicine, is introduced and evaluated across several medical question answering tasks, demonstrating the promise of these models in this domain.

Related Keywords

New York , United States , White House , District Of Columbia , South Korea , American , Han , Ben Abacha , Public Health , Governance Of Artificial Intelligence For Health , Peril National Academy Of Medicine , Health Technology , Artificial Intelligence In Health Care , Guidance World Health Organization , White House Office Of Science , Proceedings Of Machine Learning Research , C Development , Conference On Health , New York Times , Stanford University , Meeting Of The Association For Computational Linguistics , Association Of Computational Machinery , Association For Computational Linguistics , International Conference On Machine , Association For Computing Machinery , Annual Meeting , Computational Linguistics , Computational Machinery , Machine Learning Research , Patient Saf , Data Sci , Computing Machinery , Health Care , National Academy , White House Office , Making Automated Systems Work , American People , Artificial Intelligence , World Health Organization , International Conference , Machine Learning , Neural Information Processing Systems , Clinical Natural Language Processing Workshop ,

comparemela.com © 2020. All Rights Reserved.